Getting Started

- 1: Quick Start

- 2: Installation

- 2.1: Start the control plane

- 2.2: Register a cluster

- 2.3: Add-on management

- 2.4: Register a cluster via gRPC

- 2.5: Running on EKS

- 3: Add-ons and Integrations

- 3.1: Policy

- 3.1.1: Policy framework

- 3.1.2: Policy API concepts

- 3.1.3: Configuration Policy

- 3.1.4: Open Policy Agent Gatekeeper

- 3.2: Application lifecycle management

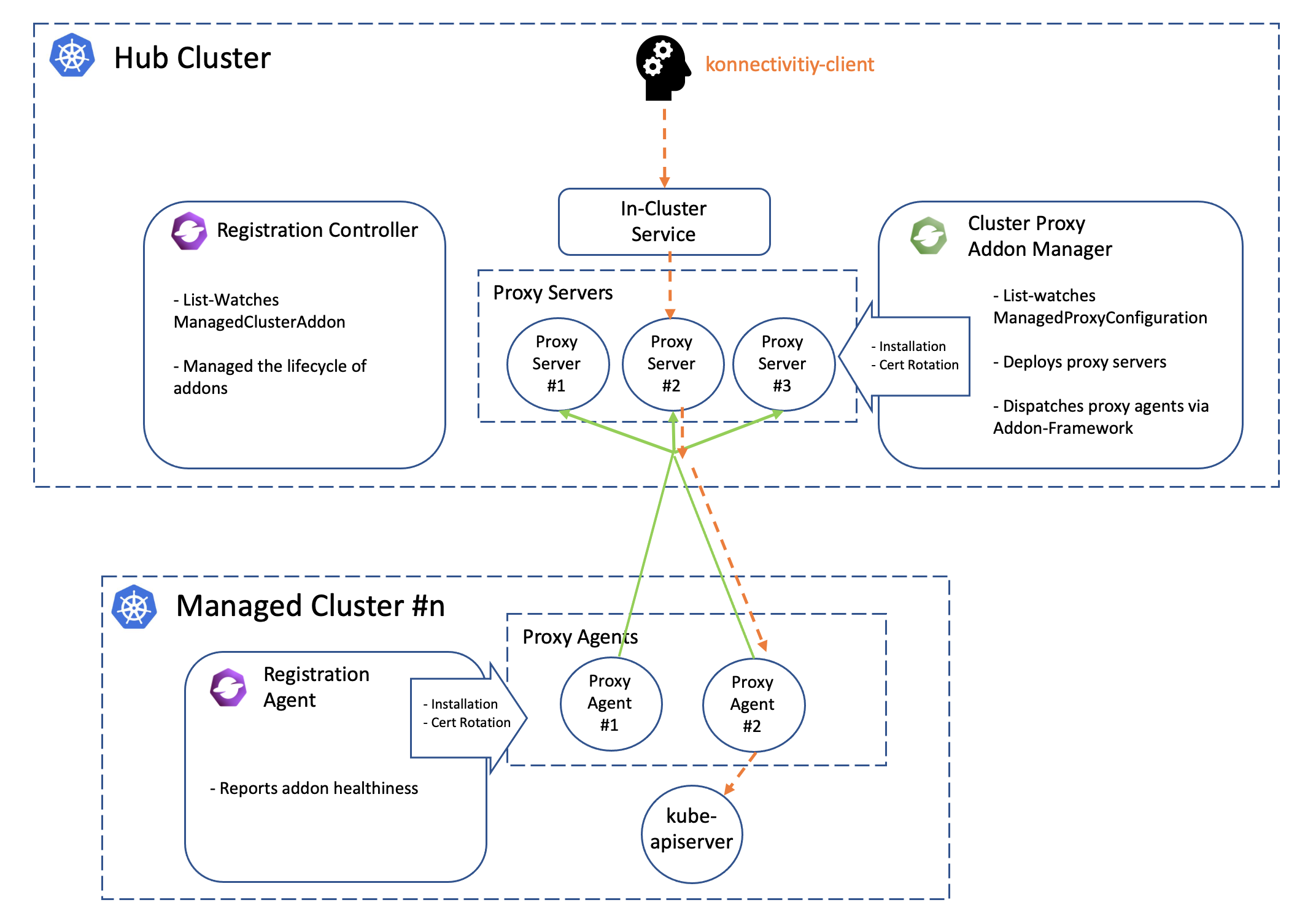

- 3.3: Cluster proxy

- 3.4: Managed service account

- 3.5: Multicluster Control Plane

- 3.6: FleetConfig Controller

- 4: Administration

1 - Quick Start

Follow these steps to set up an OCM hub with two managed clusters using clusteradm and kind.

Prerequisites

- Ensure kubectl and kustomize are installed.

- Ensure kind (v0.9.0 or later; the latest version is preferred) is installed.

Install clusteradm CLI tool

Run the following command to download and install the latest clusteradm command-line tool:

curl -L https://raw.githubusercontent.com/open-cluster-management-io/clusteradm/main/install.sh | bash

Setup hub and managed cluster

Run the following command to quickly set up a hub cluster and 2 managed clusters using kind.

curl -L https://raw.githubusercontent.com/open-cluster-management-io/OCM/main/solutions/setup-dev-environment/local-up.sh | bash

If you want to set up OCM in a production environment or on a different Kubernetes distribution, please refer to the Start the control plane and Register a cluster guides.

Alternatively, you can deploy OCM declaratively using the FleetConfig Controller.

What is next

Now you have the OCM control plane with 2 managed clusters connected! Let’s start your OCM journey.

- Deploy Kubernetes resources onto a managed cluster

- Visit the Kubernetes API server of a managed cluster through cluster-proxy

- Visit the integrations to check whether an OCM add-on meets your use case.

- Use the OCM VS Code Extension to easily generate OCM-related Kubernetes resources and track your cluster

2 - Installation

Install the core control plane that includes cluster registration and manifests distribution on the hub cluster.

Install the klusterlet agent on the managed cluster so that it can be registered and managed by the hub cluster.

2.1 - Start the control plane

Prerequisites

- The hub cluster should be v1.19+. (To run on a hub cluster version in the range [v1.16, v1.18], manually enable the feature gate “V1beta1CSRAPICompatibility”.)

- Currently the bootstrap process relies on client authentication via CSR. Therefore, if your Kubernetes distribution (like EKS) doesn’t support it, you can:

  - follow this article to run OCM natively on EKS

  - or choose the multicluster-controlplane as the hub control plane

- Ensure kubectl and kustomize are installed.

Network requirements

Configure your network settings for the hub cluster to allow the following connections.

| Direction | Endpoint | Protocol | Purpose | Used by |
|---|---|---|---|---|
| Inbound | https://{hub-api-server-url}:{port} | TCP | Kubernetes API server of the hub cluster | OCM agents, including the add-on agents, running on the managed clusters |

Install clusteradm CLI tool

It’s recommended to run the following command to download and install the

latest release of the clusteradm command-line tool:

curl -L https://raw.githubusercontent.com/open-cluster-management-io/clusteradm/main/install.sh | bash

You can also install the latest development version (main branch) by running:

# Installing clusteradm to $GOPATH/bin/

GO111MODULE=off go get -u open-cluster-management.io/clusteradm/...

Bootstrap a cluster manager

Before installing the OCM components into your clusters, export the following environment variable in your terminal so that the clusteradm command-line tool can correctly identify the hub cluster.

# The context name of the clusters in your kubeconfig

export CTX_HUB_CLUSTER=<your hub cluster context>

Call clusteradm init:

# By default, it installs the latest release of the OCM components.

# Use e.g. "--bundle-version=latest" to install latest development builds.

# NOTE: For hub cluster version between v1.16 to v1.19 use the parameter: --use-bootstrap-token

clusteradm init --wait --context ${CTX_HUB_CLUSTER}
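Because clusteradm init acts on whatever context it is given, it can help to confirm that the context name exists before running it. The following is a minimal sketch, assuming kubectl is on the PATH; the helper name context_exists is hypothetical and not part of clusteradm.

```shell
# Hypothetical helper (not part of clusteradm): succeeds only if the
# named context exists in the active kubeconfig.
context_exists() {
  # "kubectl config get-contexts -o name" prints one context name per line;
  # grep -x matches the whole line, -F treats the name as a literal string.
  kubectl config get-contexts -o name | grep -qxF -- "$1"
}

# Example use (not executed here):
#   context_exists "${CTX_HUB_CLUSTER}" || echo "unknown context: ${CTX_HUB_CLUSTER}" >&2
```

If the check fails, correct CTX_HUB_CLUSTER before calling clusteradm init.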

Configure CPU and memory resources

You can configure CPU and memory resources for the cluster manager components by adding resource flags to the

clusteradm init command. These flags indicate that all components in the hub controller will use the same resource

requirement or limit:

# Configure resource requests and limits for cluster manager components

clusteradm init \

--resource-qos-class ResourceRequirement \

--resource-limits cpu=1000m,memory=1Gi \

--resource-requests cpu=500m,memory=512Mi \

--wait --context ${CTX_HUB_CLUSTER}

Available resource configuration flags:

- --resource-qos-class: Sets the resource QoS class (Default, BestEffort, or ResourceRequirement)

- --resource-limits: Specifies resource limits as key-value pairs (e.g., cpu=800m,memory=800Mi)

- --resource-requests: Specifies resource requests as key-value pairs (e.g., cpu=500m,memory=500Mi)

The clusteradm init command installs the registration-operator on the hub cluster, which is responsible for consistently installing and upgrading a few core components for the OCM environment.

After the init command completes, a generated command is output on the console to

register your managed clusters. An example of the generated command is shown below.

clusteradm join \

--hub-token <your token data> \

--hub-apiserver <your hub kube-apiserver endpoint> \

--wait \

--cluster-name <cluster_name>

It’s recommended to save the command somewhere secure for future use. If it’s lost, you can use

clusteradm get token to get the generated command again.

Important Note on Network Accessibility:

The --hub-apiserver URL in the generated command must be network-accessible from your managed clusters. Consider the following scenarios:

- Local hub cluster (kind, minikube, etc.): The generated URL will typically be a localhost address (e.g., https://127.0.0.1:xxxxx). This URL is only accessible from your local machine and will not work for remote managed clusters hosted on cloud providers (GKE, EKS, AKS, etc.).

- Cloud-hosted managed clusters: If you plan to register managed clusters running on cloud providers (GKE, EKS, AKS, etc.), your hub cluster must be network-accessible from those cloud environments. This means:

  - Use a cloud-hosted hub cluster, or

  - Set up proper networking (load balancer, VPN, ingress, etc.) to expose your hub API server with a publicly accessible endpoint

- Local testing (both hub and managed on the same machine): For testing with multiple local clusters (e.g., two kind clusters on the same machine), the localhost URL works when using the --force-internal-endpoint-lookup flag. See the Register a cluster documentation for details.

For production deployments, it’s recommended to use a hub cluster that provides a stable, network-accessible API server endpoint.
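As a rough pre-flight check, a script can flag a --hub-apiserver value that points at a loopback address before it is handed to a remote managed cluster. This is only a sketch in plain POSIX shell: the function name is_loopback_url is hypothetical, and it inspects the URL text only, so it cannot tell whether a non-loopback endpoint is actually reachable.

```shell
# Hypothetical helper: returns 0 when the host part of the URL is a
# loopback address (localhost, 127.x.x.x, or ::1), 1 otherwise.
is_loopback_url() {
  host=${1#*://}      # drop the scheme, if any
  host=${host%%/*}    # drop any path
  case $host in
    \[*\]*) host=${host#\[}; host=${host%%\]*} ;;  # bracketed IPv6, e.g. [::1]:6443
    *)      host=${host%%:*} ;;                    # drop the port
  esac
  case $host in
    localhost|127.*|::1) return 0 ;;
    *) return 1 ;;
  esac
}

# Example use (not executed here):
#   is_loopback_url "https://127.0.0.1:41234" && echo "not reachable from remote clusters" >&2
```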

Alternative: Enable gRPC-based registration

By default, OCM uses direct Kubernetes API connections for cluster registration. For enhanced security and isolation, you can optionally enable gRPC-based registration, where managed clusters connect through a gRPC server instead of directly to the hub API server.

To enable gRPC-based registration, see Register a cluster via gRPC for detailed instructions on configuring the ClusterManager and exposing the gRPC server.

Check out the running instances of the control plane

kubectl -n open-cluster-management get pod --context ${CTX_HUB_CLUSTER}

NAME READY STATUS RESTARTS AGE

cluster-manager-695d945d4d-5dn8k 1/1 Running 0 19d

Additionally, to check out the instances of OCM’s hub control plane, run the following command:

kubectl -n open-cluster-management-hub get pod --context ${CTX_HUB_CLUSTER}

NAME READY STATUS RESTARTS AGE

cluster-manager-placement-controller-857f8f7654-x7sfz 1/1 Running 0 19d

cluster-manager-registration-controller-85b6bd784f-jbg8s 1/1 Running 0 19d

cluster-manager-registration-webhook-59c9b89499-n7m2x 1/1 Running 0 19d

cluster-manager-work-webhook-59cf7dc855-shq5p 1/1 Running 0 19d

...

The overall installation information is visible on the clustermanager custom resource:

kubectl get clustermanager cluster-manager -o yaml --context ${CTX_HUB_CLUSTER}

Uninstall the OCM from the control plane

Before uninstalling the OCM components from your clusters, please detach the managed cluster from the control plane.

clusteradm clean --context ${CTX_HUB_CLUSTER}

Check the instances of OCM’s hub control plane are removed.

kubectl -n open-cluster-management-hub get pod --context ${CTX_HUB_CLUSTER}

No resources found in open-cluster-management-hub namespace.

kubectl -n open-cluster-management get pod --context ${CTX_HUB_CLUSTER}

No resources found in open-cluster-management namespace.

Check the clustermanager resource is removed from the control plane.

kubectl get clustermanager --context ${CTX_HUB_CLUSTER}

error: the server doesn't have a resource type "clustermanager"

2.2 - Register a cluster

After the cluster manager is installed on the hub cluster, you need to install the klusterlet agent on another cluster so that it can be registered and managed by the hub cluster.

Prerequisites

Network requirements

Configure your network settings for the managed clusters to allow the following connections.

| Direction | Endpoint | Protocol | Purpose | Used by |
|---|---|---|---|---|
| Outbound | https://{hub-api-server-url}:{port} | TCP | Kubernetes API server of the hub cluster | OCM agents, including the add-on agents, running on the managed clusters |

To use a proxy, please make sure the proxy server is well configured to allow the above connections and the proxy server is reachable for the managed clusters. See Register a cluster to hub through proxy server for more details.

Install clusteradm CLI tool

It’s recommended to run the following command to download and install the

latest release of the clusteradm command-line tool:

curl -L https://raw.githubusercontent.com/open-cluster-management-io/clusteradm/main/install.sh | bash

You can also install the latest development version (main branch) by running:

# Installing clusteradm to $GOPATH/bin/

GO111MODULE=off go get -u open-cluster-management.io/clusteradm/...

Bootstrap a klusterlet

Before installing the OCM components into your clusters, export the following environment variables in your terminal so that the clusteradm command-line tool can correctly identify the hub cluster and the managed cluster:

# The context name of the clusters in your kubeconfig

export CTX_HUB_CLUSTER=<your hub cluster context>

export CTX_MANAGED_CLUSTER=<your managed cluster context>

Copy the previously generated clusteradm join command and add arguments according to your deployment scenario.

NOTE: If there is no configmap kube-root-ca.crt in the kube-public namespace of the hub cluster, set the --ca-file flag to provide a valid hub CA file to help set up the external client.

Understanding deployment scenarios

Before running the clusteradm join command, understand your deployment scenario:

- Both hub and managed clusters are local (e.g., kind): Use the --force-internal-endpoint-lookup flag. Both clusters must be able to reach each other over the network (typically when running on the same machine).

- Managed cluster is on a cloud provider (GKE, AKS, etc.): The --hub-apiserver URL must be a network-accessible endpoint that the cloud-hosted managed cluster can reach. A localhost URL (e.g., https://127.0.0.1:xxxxx) from a local kind hub cluster will not work. Your hub cluster must be network-accessible from the cloud environment.

- EKS clusters: AWS EKS requires special handling with registration drivers (grpc or awsirsa) because EKS doesn’t support the CSR API by default. See the Running on EKS guide.

# Both hub and managed clusters running locally (e.g., two kind clusters on the same machine)
# Use --force-internal-endpoint-lookup to allow internal endpoint resolution
# "cluster1" can be replaced with any other unique cluster name
clusteradm join \
    --hub-token <your token data> \
    --hub-apiserver <your hub cluster endpoint> \
    --wait \
    --cluster-name "cluster1" \
    --force-internal-endpoint-lookup \
    --context ${CTX_MANAGED_CLUSTER}

# Managed cluster on GKE, AKS, or other standard Kubernetes cloud provider
# Hub cluster must have a network-accessible API server endpoint (reachable from the cloud)
# Do NOT use --force-internal-endpoint-lookup
# "cluster1" can be replaced with any other unique cluster name
clusteradm join \
    --hub-token <your token data> \
    --hub-apiserver <your hub cluster endpoint> \
    --wait \
    --cluster-name "cluster1" \
    --context ${CTX_MANAGED_CLUSTER}

Important: If your hub cluster is a local kind cluster, the managed cluster will not be able to reach the localhost API server URL. You must use a hub cluster that is network-accessible from your cloud environment, or set up appropriate networking (load balancer, VPN, ingress, etc.) to expose your hub API server.

AWS EKS clusters require special registration drivers (grpc or awsirsa) because EKS doesn’t support the CSR API by default.

Please follow the Running on EKS guide for detailed instructions on registering EKS clusters.

# "cluster1" can be replaced with any other unique cluster name
clusteradm join \
    --hub-token <your token data> \
    --hub-apiserver <your hub cluster endpoint> \
    --wait \
    --cluster-name "cluster1" \
    --context ${CTX_MANAGED_CLUSTER}

Bootstrap the hub cluster as a klusterlet (Optional)

In addition to registering external clusters, the hub cluster can register itself as a managed cluster by installing the klusterlet components (registration agent and work agent) on the hub. This allows the hub to be managed like any other cluster in your OCM environment.

To bootstrap the hub cluster as a klusterlet, run the following clusteradm join command with hub as context:

# Register the hub cluster as its own managed cluster (local hub, e.g., kind)
# "local-cluster" is a common name for the hub acting as a klusterlet
clusteradm join \
    --hub-token <your token data> \
    --hub-apiserver <your hub cluster endpoint> \
    --wait \
    --cluster-name "local-cluster" \
    --force-internal-endpoint-lookup \
    --context ${CTX_HUB_CLUSTER}

# Register the hub cluster as its own managed cluster (cloud-hosted hub)
# The hub API server endpoint must be accessible from the cloud
# "local-cluster" is a common name for the hub acting as a klusterlet
clusteradm join \
    --hub-token <your token data> \
    --hub-apiserver <your hub cluster endpoint> \
    --wait \
    --cluster-name "local-cluster" \
    --context ${CTX_HUB_CLUSTER}

Note: The klusterlet components will run alongside the hub components in the same cluster. Ensure your hub cluster has sufficient resources to accommodate both hub and agent workloads.

Configure CPU and memory resources

You can configure CPU and memory resources for the klusterlet agent components by adding resource flags to the clusteradm join command. These flags indicate that all components in the klusterlet agent will use the same resource requirement or limit:

# Configure resource requests and limits for klusterlet components (hub and managed clusters both local, e.g., kind)

clusteradm join \

--hub-token <your token data> \

--hub-apiserver <your hub cluster endpoint> \

--wait \

--cluster-name "cluster1" \

--resource-qos-class ResourceRequirement \

--resource-limits cpu=800m,memory=800Mi \

--resource-requests cpu=400m,memory=400Mi \

--force-internal-endpoint-lookup \

--context ${CTX_MANAGED_CLUSTER}

# Configure resource requests and limits for klusterlet components (managed cluster on a cloud provider; no --force-internal-endpoint-lookup)

clusteradm join \

--hub-token <your token data> \

--hub-apiserver <your hub cluster endpoint> \

--wait \

--cluster-name "cluster1" \

--resource-qos-class ResourceRequirement \

--resource-limits cpu=800m,memory=800Mi \

--resource-requests cpu=400m,memory=400Mi \

--context ${CTX_MANAGED_CLUSTER}

Available resource configuration flags:

- --resource-qos-class: Sets the resource QoS class (Default, BestEffort, or ResourceRequirement)

- --resource-limits: Specifies resource limits as key-value pairs (e.g., cpu=800m,memory=800Mi)

- --resource-requests: Specifies resource requests as key-value pairs (e.g., cpu=500m,memory=500Mi)

Bootstrap a klusterlet in hosted mode (Optional)

With the above command, the klusterlet components (registration-agent and work-agent) are deployed on the managed cluster, so the hub cluster must be exposed to the managed cluster. OCM also provides an option to run the klusterlet components outside the managed cluster, for example, on the hub cluster (hosted mode).

The hosted mode deployment is still experimental. Consider using it only when:

- you want to reduce the footprint of the managed cluster.

- you do not want to expose the hub cluster to the managed cluster directly.

In hosted mode, the cluster where the klusterlet is running is called the hosting cluster. Run the following command against the hosting cluster to register the managed cluster to the hub.

# NOTE for KinD clusters (both hub and managed are kind on the same machine):
# 1. hub is KinD, use the parameter: --force-internal-endpoint-lookup
# 2. managed is KinD, --managed-cluster-kubeconfig should be internal: `kind get kubeconfig --name managed --internal`
# "cluster1" can be replaced with any other unique cluster name
clusteradm join \
    --hub-token <your token data> \
    --hub-apiserver <your hub cluster endpoint> \
    --wait \
    --cluster-name "cluster1" \
    --mode hosted \
    --managed-cluster-kubeconfig <your managed cluster kubeconfig> \
    --force-internal-endpoint-lookup \
    --context <your hosting cluster context>

# For cloud-hosted managed clusters
# Hub cluster must have a network-accessible API server endpoint (reachable from the cloud)
# Do NOT use --force-internal-endpoint-lookup
# "cluster1" can be replaced with any other unique cluster name
clusteradm join \
    --hub-token <your token data> \
    --hub-apiserver <your hub cluster endpoint> \
    --wait \
    --cluster-name "cluster1" \
    --mode hosted \
    --managed-cluster-kubeconfig <your managed cluster kubeconfig> \
    --context <your hosting cluster context>

AWS EKS clusters require special registration drivers. See the Running on EKS guide.

# "cluster1" can be replaced with any other unique cluster name
clusteradm join \
    --hub-token <your token data> \
    --hub-apiserver <your hub cluster endpoint> \
    --wait \
    --cluster-name "cluster1" \
    --mode hosted \
    --managed-cluster-kubeconfig <your managed cluster kubeconfig> \
    --context <your hosting cluster context>

Resource configuration in hosted mode:

You can also configure CPU and memory resources when using hosted mode by adding the same resource flags:

# Configure resource requests and limits for klusterlet components in hosted mode (local clusters, e.g., kind)

clusteradm join \

--hub-token <your token data> \

--hub-apiserver <your hub cluster endpoint> \

--wait \

--cluster-name "cluster1" \

--mode hosted \

--managed-cluster-kubeconfig <your managed cluster kubeconfig> \

--resource-qos-class ResourceRequirement \

--resource-limits cpu=800m,memory=800Mi \

--resource-requests cpu=400m,memory=400Mi \

--force-internal-endpoint-lookup \

--context <your hosting cluster context>

# Configure resource requests and limits for klusterlet components in hosted mode (cloud-hosted managed clusters; no --force-internal-endpoint-lookup)

clusteradm join \

--hub-token <your token data> \

--hub-apiserver <your hub cluster endpoint> \

--wait \

--cluster-name "cluster1" \

--mode hosted \

--managed-cluster-kubeconfig <your managed cluster kubeconfig> \

--resource-qos-class ResourceRequirement \

--resource-limits cpu=800m,memory=800Mi \

--resource-requests cpu=400m,memory=400Mi \

--context <your hosting cluster context>

Bootstrap a klusterlet in singleton mode

To reduce the footprint of the agent in the managed cluster, singleton mode was introduced in v0.12.0. In singleton mode, the work and registration agents run as a single pod in the managed cluster.

Note: to run the klusterlet in singleton mode, you need clusteradm v0.12.0 or later.

# Both hub and managed clusters running locally
# Use --force-internal-endpoint-lookup
# "cluster1" can be replaced with any other unique cluster name
clusteradm join \
    --hub-token <your token data> \
    --hub-apiserver <your hub cluster endpoint> \
    --wait \
    --cluster-name "cluster1" \
    --singleton \
    --force-internal-endpoint-lookup \
    --context ${CTX_MANAGED_CLUSTER}

# Managed cluster on cloud provider
# Hub must be network-accessible, do NOT use --force-internal-endpoint-lookup
# "cluster1" can be replaced with any other unique cluster name
clusteradm join \
    --hub-token <your token data> \
    --hub-apiserver <your hub cluster endpoint> \
    --wait \
    --cluster-name "cluster1" \
    --singleton \
    --context ${CTX_MANAGED_CLUSTER}

Resource configuration in singleton mode:

You can also configure CPU and memory resources when using singleton mode:

# Configure resource requests and limits for klusterlet components in singleton mode (local clusters, e.g., kind)

clusteradm join \

--hub-token <your token data> \

--hub-apiserver <your hub cluster endpoint> \

--wait \

--cluster-name "cluster1" \

--singleton \

--resource-qos-class ResourceRequirement \

--resource-limits cpu=600m,memory=600Mi \

--resource-requests cpu=300m,memory=300Mi \

--force-internal-endpoint-lookup \

--context ${CTX_MANAGED_CLUSTER}

# Configure resource requests and limits for klusterlet components in singleton mode (managed cluster on a cloud provider; no --force-internal-endpoint-lookup)

clusteradm join \

--hub-token <your token data> \

--hub-apiserver <your hub cluster endpoint> \

--wait \

--cluster-name "cluster1" \

--singleton \

--resource-qos-class ResourceRequirement \

--resource-limits cpu=600m,memory=600Mi \

--resource-requests cpu=300m,memory=300Mi \

--context ${CTX_MANAGED_CLUSTER}

Accept the join request and verify

After the OCM agent is running on your managed cluster, it sends a “handshake” to your hub cluster and waits for approval from the hub cluster admin. This section walks through accepting the registration request from the perspective of an OCM hub admin.

- Wait for the creation of the CSR object, which is created on the hub cluster by your managed cluster’s OCM agents:

  kubectl get csr -w --context ${CTX_HUB_CLUSTER} | grep cluster1 # or the previously chosen cluster name

  An example of a pending CSR request is shown below:

  cluster1-tqcjj 33s kubernetes.io/kube-apiserver-client system:serviceaccount:open-cluster-management:cluster-bootstrap Pending

- Accept the join request using the clusteradm tool:

  clusteradm accept --clusters cluster1 --context ${CTX_HUB_CLUSTER}

  After running the accept command, the CSR from your managed cluster named “cluster1” will be approved. Additionally, it will instruct the OCM hub control plane to set up related objects (such as a namespace named “cluster1” in the hub cluster) and RBAC permissions automatically.

- Verify the installation of the OCM agents on your managed cluster by running:

  kubectl -n open-cluster-management-agent get pod --context ${CTX_MANAGED_CLUSTER}
  NAME READY STATUS RESTARTS AGE
  klusterlet-registration-agent-598fd79988-jxx7n 1/1 Running 0 19d
  klusterlet-work-agent-7d47f4b5c5-dnkqw 1/1 Running 0 19d

- Verify that the cluster1 ManagedCluster object was created successfully by running:

  kubectl get managedcluster --context ${CTX_HUB_CLUSTER}

  Then you should get a result that resembles the following:

  NAME HUB ACCEPTED MANAGED CLUSTER URLS JOINED AVAILABLE AGE
  cluster1 true <your endpoint> True True 5m23s

If the managed cluster status is not true, refer to Troubleshooting to debug on your cluster.
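For scripted setups, the final verification can be turned into a blocking wait instead of a manual check. The sketch below relies on kubectl wait and assumes the availability condition on the ManagedCluster is named ManagedClusterConditionAvailable; the helper name wait_for_managed_cluster and the 120s default timeout are hypothetical choices.

```shell
# Hypothetical helper: block until the ManagedCluster reports that it is available.
# $1: managed cluster name, $2: hub kubeconfig context, $3: optional timeout (default 120s)
wait_for_managed_cluster() {
  kubectl wait "managedcluster/$1" \
    --for=condition=ManagedClusterConditionAvailable \
    --timeout="${3:-120s}" \
    --context "$2"
}

# Example use (not executed here):
#   wait_for_managed_cluster cluster1 "${CTX_HUB_CLUSTER}" 300s
```

A non-zero exit status means the condition was not reached within the timeout, which is a reasonable point to start the troubleshooting steps.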

Apply a Manifestwork

After the managed cluster is registered, test that you can deploy a pod to the managed cluster from the hub cluster. Create a manifest-work.yaml as shown in this example:

apiVersion: work.open-cluster-management.io/v1
kind: ManifestWork
metadata:
  name: mw-01
  namespace: ${MANAGED_CLUSTER_NAME}
spec:
  workload:
    manifests:
      - apiVersion: v1
        kind: Pod
        metadata:
          name: hello
          namespace: default
        spec:
          containers:
            - name: hello
              image: busybox
              command: ["sh", "-c", 'echo "Hello, Kubernetes!" && sleep 3600']
          restartPolicy: OnFailure

Apply the yaml file to the hub cluster.

kubectl apply -f manifest-work.yaml --context ${CTX_HUB_CLUSTER}
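Note that kubectl does not expand shell variables inside a file, so the ${MANAGED_CLUSTER_NAME} placeholder in manifest-work.yaml has to be replaced with the real cluster name before the file is applied. One possible way, sketched here with sed, is to render the file on the fly; the function name render_manifest is hypothetical, and the sketch assumes the cluster name contains no "|" or "&" characters.

```shell
# Hypothetical helper: replace the literal ${MANAGED_CLUSTER_NAME} placeholder.
# $1: managed cluster name; reads the template on stdin, writes the result to stdout.
render_manifest() {
  # [$] matches a literal dollar sign, so the shell never expands the placeholder.
  sed 's|[$]{MANAGED_CLUSTER_NAME}|'"$1"'|g'
}

# Example use (not executed here):
#   render_manifest cluster1 < manifest-work.yaml | kubectl apply -f - --context "${CTX_HUB_CLUSTER}"
```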

Verify that the manifestwork resource was applied to the hub.

kubectl -n ${MANAGED_CLUSTER_NAME} get manifestwork/mw-01 --context ${CTX_HUB_CLUSTER} -o yaml

Check on the managed cluster and see the hello Pod has been deployed from the hub cluster.

$ kubectl -n default get pod --context ${CTX_MANAGED_CLUSTER}

NAME READY STATUS RESTARTS AGE

hello 1/1 Running 0 108s

Troubleshooting

- If the managed cluster status is not true.

  For example, the result below is shown when checking managedcluster.

  $ kubectl get managedcluster --context ${CTX_HUB_CLUSTER}
  NAME HUB ACCEPTED MANAGED CLUSTER URLS JOINED AVAILABLE AGE
  ${MANAGED_CLUSTER_NAME} true https://localhost Unknown 46m

  There are many possible reasons for this problem. You can use the commands below to get more debug info. If the provided info doesn’t help, please log an issue.

  On the hub cluster, check the managedcluster status.

  kubectl get managedcluster ${MANAGED_CLUSTER_NAME} --context ${CTX_HUB_CLUSTER} -o yaml

  On the hub cluster, check the lease status.

  kubectl get lease -n ${MANAGED_CLUSTER_NAME} --context ${CTX_HUB_CLUSTER}

  On the managed cluster, check the klusterlet status.

  kubectl get klusterlet -o yaml --context ${CTX_MANAGED_CLUSTER}

Detach the cluster from hub

Remove the resources generated when registering with the hub cluster.

clusteradm unjoin --cluster-name "cluster1" --context ${CTX_MANAGED_CLUSTER}

Check the installation of the OCM agent is removed from the managed cluster.

kubectl -n open-cluster-management-agent get pod --context ${CTX_MANAGED_CLUSTER}

No resources found in open-cluster-management-agent namespace.

Check the klusterlet is removed from the managed cluster.

kubectl get klusterlet --context ${CTX_MANAGED_CLUSTER}

error: the server doesn't have a resource type "klusterlet"

Resource cleanup when the managed cluster is deleted

When a user deletes the managedCluster resource, all associated resources within the cluster namespace must also be removed. This includes managedClusterAddons, manifestWorks, and the roleBindings for the klusterlet agent. Resource cleanup follows a specific sequence to prevent resources from being stuck in a terminating state:

- managedClusterAddons are deleted first.

- manifestWorks are removed after all managedClusterAddons are deleted.

- Among resources of the same kind (managedClusterAddon or manifestWork), custom deletion ordering can be defined using the open-cluster-management.io/cleanup-priority annotation:

  - Priority values range from 0 to 100 (lower values execute first).

The open-cluster-management.io/cleanup-priority annotation controls deletion order when resource instances have dependencies. For example:

A manifestWork that applies a CRD and operator should be deleted after a manifestWork that creates a CR instance, allowing the operator to perform cleanup after the CR is removed.
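The ordering in this example can be expressed by giving the CR manifestWork a lower priority value than the operator manifestWork. The sketch below wraps kubectl annotate with a range check; the function name set_cleanup_priority and the manifestWork names in the usage comment are hypothetical.

```shell
# Hypothetical helper: set the cleanup priority annotation on a ManifestWork.
# $1: cluster namespace on the hub, $2: ManifestWork name, $3: priority (0-100, lower is deleted first)
set_cleanup_priority() {
  case $3 in
    ''|*[!0-9]*) echo "priority must be a non-negative integer" >&2; return 1 ;;
  esac
  [ "$3" -le 100 ] || { echo "priority must be between 0 and 100" >&2; return 1; }
  kubectl annotate manifestwork "$2" -n "$1" --overwrite \
    "open-cluster-management.io/cleanup-priority=$3"
}

# Example use (not executed here; both manifestWork names are made up):
#   set_cleanup_priority cluster1 my-cr-work 10        # CR instance is deleted first
#   set_cleanup_priority cluster1 my-operator-work 20  # CRD and operator are deleted afterwards
```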

The ResourceCleanup featureGate for cluster registration on the Hub cluster enables automatic cleanup of managedClusterAddons and manifestWorks within the cluster namespace after cluster unjoining.

Version Compatibility:

- The ResourceCleanup featureGate was introduced in OCM v0.13.0 and is disabled by default in OCM v0.16.0 and earlier versions. To activate it, modify the clusterManager CR configuration:

  registrationConfiguration:
    featureGates:
    - feature: ResourceCleanup
      mode: Enable

- Starting with OCM v0.17.0, the ResourceCleanup featureGate has been upgraded from Alpha to Beta status and is enabled by default.

Disabling the Feature: To deactivate this functionality, update the clusterManager CR on the hub cluster:

registrationConfiguration:
  featureGates:
  - feature: ResourceCleanup
    mode: Disable
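Instead of editing the CR by hand, the same change can be applied with kubectl patch. The following sketch only builds the patch document; it assumes registrationConfiguration sits under spec in the clusterManager CR, and the function name resource_cleanup_patch is hypothetical. Be aware that a merge patch replaces the whole featureGates list, so any other feature gates already configured there would need to be included as well.

```shell
# Hypothetical helper: print a JSON merge patch that sets the ResourceCleanup feature gate.
# $1: Enable or Disable
resource_cleanup_patch() {
  case $1 in
    Enable|Disable) ;;
    *) echo "mode must be Enable or Disable" >&2; return 1 ;;
  esac
  printf '{"spec":{"registrationConfiguration":{"featureGates":[{"feature":"ResourceCleanup","mode":"%s"}]}}}\n' "$1"
}

# Example use (not executed here):
#   kubectl patch clustermanager cluster-manager --type merge \
#     -p "$(resource_cleanup_patch Disable)" --context "${CTX_HUB_CLUSTER}"
```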

2.3 - Add-on management

API Version Note: OCM addon APIs support both v1alpha1 (stored version) and v1beta1. Both versions are automatically converted by Kubernetes. This documentation uses v1alpha1. For API version details, see Enhancement: Addon API v1beta1.

Add-on enablement

From a user’s perspective, to install an add-on on the hub cluster, the hub admin registers a globally unique ClusterManagementAddOn resource as a singleton placeholder in the hub cluster. For instance, the helloworld add-on can be registered to the hub cluster by creating:

apiVersion: addon.open-cluster-management.io/v1alpha1
kind: ClusterManagementAddOn
metadata:
  name: helloworld
spec:
  addOnMeta:
    displayName: helloworld

Enable the add-on manually

The add-on manager running on the hub is responsible for configuring the installation of add-on agents for each managed cluster. To enable the add-on for a certain managed cluster, create a ManagedClusterAddOn resource in the cluster namespace. The name of the ManagedClusterAddOn must be the same as the name of the corresponding ClusterManagementAddOn. For instance, the following example enables the helloworld add-on in “cluster1”:

apiVersion: addon.open-cluster-management.io/v1alpha1
kind: ManagedClusterAddOn
metadata:
  name: helloworld
  namespace: cluster1
spec:
  installNamespace: helloworld
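When the same add-on has to be enabled manually on several clusters, the manifest above can be generated per cluster rather than copied. This is a minimal sketch that reuses the fields shown in the example; the function name managed_cluster_addon_manifest and the cluster names in the usage comment are hypothetical.

```shell
# Hypothetical helper: print a ManagedClusterAddOn manifest.
# $1: add-on name (must match the ClusterManagementAddOn name)
# $2: managed cluster name, which is also its namespace on the hub
# $3: namespace on the managed cluster to install the agent into
managed_cluster_addon_manifest() {
  cat <<EOF
apiVersion: addon.open-cluster-management.io/v1alpha1
kind: ManagedClusterAddOn
metadata:
  name: $1
  namespace: $2
spec:
  installNamespace: $3
EOF
}

# Example use (not executed here):
#   for c in cluster1 cluster2; do
#     managed_cluster_addon_manifest helloworld "$c" helloworld | kubectl apply -f -
#   done
```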

Enable the add-on automatically

If the add-on is developed with automatic installation, which supports auto-install by cluster discovery, then the ManagedClusterAddOn is created automatically, either for all managed cluster namespaces or only for the selected managed cluster namespaces.

Enable the add-on by install strategy

If the add-on is developed following the guidelines mentioned in managing the add-on agent lifecycle by addon-manager, the user can define an installStrategy in the ClusterManagementAddOn to specify on which clusters the ManagedClusterAddOn should be enabled. For details, see Install strategy.

Add-on healthiness

The health of the add-on instances is visible when listing the add-ons via kubectl:

$ kubectl get managedclusteraddon -A

NAMESPACE NAME AVAILABLE DEGRADED PROGRESSING

<cluster> <addon> True

The add-on agent is expected to report its health periodically as long as it is running. Optionally, the version of the add-on agent can also be reflected in the resources so that agent upgrades can be rolled out progressively.
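For automation, the AVAILABLE column can be read directly from the resource status. The sketch below assumes the corresponding condition type on the ManagedClusterAddOn is named Available; the function name addon_is_available is hypothetical.

```shell
# Hypothetical helper: succeed only if the add-on reports Available=True.
# $1: managed cluster namespace on the hub, $2: add-on name
addon_is_available() {
  status=$(kubectl -n "$1" get managedclusteraddon "$2" \
    -o jsonpath='{.status.conditions[?(@.type=="Available")].status}')
  [ "$status" = "True" ]
}

# Example use (not executed here):
#   addon_is_available cluster1 helloworld || echo "helloworld is not available on cluster1" >&2
```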

Clean the add-ons

Last but not least, the add-on can be cleanly uninstalled by simply deleting the corresponding ClusterManagementAddOn resource from the hub cluster, which is the “root” of the whole add-on. After the uninstallation, the OCM platform automatically cleans up by removing all of the add-on's components, both in the hub cluster and in the managed clusters.

Add-on lifecycle management

Install strategy

InstallStrategy specifies the clusters on which the related ManagedClusterAddOns should be installed. For example, the following enables the helloworld add-on on clusters whose platform.open-cluster-management.io cluster claim is aws.

apiVersion: addon.open-cluster-management.io/v1alpha1
kind: ClusterManagementAddOn
metadata:
  name: helloworld
  annotations:
    addon.open-cluster-management.io/lifecycle: "addon-manager"
spec:
  addOnMeta:
    displayName: helloworld
  installStrategy:
    type: Placements
    placements:
    - name: placement-aws
      namespace: default
---
apiVersion: cluster.open-cluster-management.io/v1beta1
kind: Placement
metadata:
  name: placement-aws
  namespace: default
spec:
  predicates:
  - requiredClusterSelector:
      claimSelector:
        matchExpressions:
        - key: platform.open-cluster-management.io
          operator: In
          values:
          - aws

Rollout strategy

With the rollout strategy defined in the ClusterManagementAddOn API, users can

control the upgrade behavior of the addon when there are changes in the

configurations.

For example, suppose the add-on user updates the “deploy-config” and wants to apply the change to the add-ons in a “canary” decision group first. If all of those add-ons upgrade successfully, the rest of the clusters are then upgraded progressively, with at most 25% of the clusters upgrading at a time. The rollout strategy can be defined as follows:

apiVersion: addon.open-cluster-management.io/v1alpha1
kind: ClusterManagementAddOn
metadata:
  name: helloworld
  annotations:
    addon.open-cluster-management.io/lifecycle: "addon-manager"
spec:
  addOnMeta:
    displayName: helloworld
  installStrategy:
    type: Placements
    placements:
    - name: placement-aws
      namespace: default
      configs:
      - group: addon.open-cluster-management.io
        resource: addondeploymentconfigs
        name: deploy-config
        namespace: open-cluster-management
      rolloutStrategy:
        type: Progressive
        progressive:
          mandatoryDecisionGroups:
          - groupName: "prod-canary-west"
          - groupName: "prod-canary-east"
          maxConcurrency: 25%
          minSuccessTime: 5m
          progressDeadline: 10m
          maxFailures: 2

In the above example with type Progressive, once the user updates the “deploy-config”, the controller rolls out to the clusters in mandatoryDecisionGroups first, then rolls out to the other clusters at the rate defined in maxConcurrency.

- minSuccessTime is a “soak” time: the controller waits for 5 minutes after a cluster reaches a successful state, provided maxFailures isn’t breached. If the workload status remains successful after this 5-minute interval, the rollout proceeds.

- progressDeadline means the controller waits for a maximum of 10 minutes for the workload to reach a successful state. If the workload fails to achieve success within 10 minutes, the controller stops waiting, marks the workload as “timeout”, and includes it in the count of maxFailures.

- maxFailures means the controller can tolerate up to 2 clusters with a failed status. Once maxFailures is breached, the rollout stops.
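The ordering described above can be sketched in a few lines of Python. This is a simplified illustration, not the add-on manager's implementation: the names plan_rollout and should_stop and the cluster names are hypothetical, and the sketch assumes that maxConcurrency is a percentage of all selected clusters, rounded up, and that each mandatory decision group forms its own batch.

```python
import math


def plan_rollout(groups, mandatory, max_concurrency_pct):
    """Return batches of cluster names in rollout order.

    groups: dict mapping decision group name -> list of cluster names
    mandatory: group names that are rolled out first, in order
    max_concurrency_pct: for example 25 for "25%"
    """
    total = sum(len(clusters) for clusters in groups.values())
    # Assumption: the percentage is scaled against all clusters and rounded up.
    batch_size = max(1, math.ceil(total * max_concurrency_pct / 100))

    batches = []
    # Mandatory decision groups go first, one group per batch.
    for name in mandatory:
        batches.append(list(groups[name]))

    # The remaining clusters follow in batches of at most batch_size.
    rest = [cluster
            for name, clusters in groups.items()
            if name not in mandatory
            for cluster in clusters]
    for i in range(0, len(rest), batch_size):
        batches.append(rest[i:i + batch_size])
    return batches


def should_stop(failed_or_timed_out, max_failures):
    """The rollout stops once more than max_failures clusters failed or timed out."""
    return failed_or_timed_out > max_failures


groups = {
    "prod-canary-west": ["west-1"],
    "prod-canary-east": ["east-1"],
    "others": ["c1", "c2", "c3", "c4", "c5", "c6"],
}
batches = plan_rollout(groups, ["prod-canary-west", "prod-canary-east"], 25)
for batch in batches:
    print(batch)
```

With 8 clusters and a 25% limit, the sketch puts the two canary groups first and then the remaining clusters two at a time.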

Add-ons currently support three types of rolloutStrategy: All, Progressive, and ProgressivePerGroup. For more information about the rollout strategies, see the Rollout Strategy document.

Add-on configurations

Default configurations

In ClusterManagementAddOn, spec.supportedConfigs is a list of configuration types supported by the add-on. defaultConfig represents the namespace and name of the default add-on configuration, which is useful in scenarios where all add-ons have the same configuration. Only one configuration of the same group and resource can be specified in the defaultConfig.

In the example below, add-ons on all the clusters will use “default-deploy-config” and “default-addon-template”.

apiVersion: addon.open-cluster-management.io/v1alpha1
kind: ClusterManagementAddOn
metadata:
  name: helloworld
  annotations:
    addon.open-cluster-management.io/lifecycle: "addon-manager"
spec:
  addOnMeta:
    displayName: helloworld
  supportedConfigs:
  - defaultConfig:
      name: default-deploy-config
      namespace: open-cluster-management
    group: addon.open-cluster-management.io
    resource: addondeploymentconfigs
  - defaultConfig:
      name: default-addon-template
      namespace: open-cluster-management
    group: addon.open-cluster-management.io
    resource: addontemplates

Note on namespace configuration: When using AddOnDeploymentConfig with addon templates, if you want to preserve the namespace defined in your AddOnTemplate, you must explicitly set agentInstallNamespace: "" in the AddOnDeploymentConfig. Otherwise, the default namespace open-cluster-management-agent-addon will be used. See Namespace configuration with AddOnDeploymentConfig for details.

Configurations per install strategy

In ClusterManagementAddOn, spec.installStrategy.placements[].configs lists the configurations of the ManagedClusterAddOn during installation for a group of clusters. Since OCM v0.15.0, multiple configurations with the same group and resource can be defined in this field. If Default configurations are defined, the configurations in this field override them on the selected clusters by group and resource.

In the example below, add-ons on clusters selected by Placement will

use “override-deploy-config” and “override-addon-template”, while all the other add-ons

will still use “default-deploy-config” and “default-addon-template”.

apiVersion: addon.open-cluster-management.io/v1alpha1
kind: ClusterManagementAddOn
metadata:
  name: helloworld
  annotations:
    addon.open-cluster-management.io/lifecycle: "addon-manager"
spec:
  addOnMeta:
    displayName: helloworld
  supportedConfigs:
  - defaultConfig:
      name: default-deploy-config
      namespace: open-cluster-management
    group: addon.open-cluster-management.io
    resource: addondeploymentconfigs
  - defaultConfig:
      name: default-addon-template
      namespace: open-cluster-management
    group: addon.open-cluster-management.io
    resource: addontemplates
  installStrategy:
    type: Placements
    placements:
    - name: <placement-name>
      namespace: <placement-namespace>
      configs:
      - name: override-deploy-config
        namespace: open-cluster-management
        group: addon.open-cluster-management.io
        resource: addondeploymentconfigs
      - name: override-addon-template
        namespace: open-cluster-management
        group: addon.open-cluster-management.io
        resource: addontemplates

Below are some recommended Placements for the install strategy:

- To apply the same configuration across all ManagedClusters, use Default configurations.

- To apply the configuration to the hub cluster, the hub cluster must have a specific identifying label or claim, such as local-cluster: "true", for example:

apiVersion: cluster.open-cluster-management.io/v1beta1
kind: Placement
metadata:
  name: <placement-name>
  namespace: <placement-namespace>
spec:
  predicates:
  - requiredClusterSelector:
      labelSelector:
        matchLabels:
          local-cluster: "true"

- To apply the configuration to the spoke clusters, use the following Placement:

apiVersion: cluster.open-cluster-management.io/v1beta1
kind: Placement
metadata:
  name: <placement-name>
  namespace: <placement-namespace>
spec:
  predicates:
  - requiredClusterSelector:
      labelSelector:
        matchExpressions:
        - key: local-cluster
          operator: NotIn
          values:
          - "true"
  # Uncomment the following to install even when clusters are unreachable or unavailable.
  # tolerations:
  # - key: cluster.open-cluster-management.io/unreachable
  #   operator: Equal
  # - key: cluster.open-cluster-management.io/unavailable
  #   operator: Equal

- To apply the configuration to a specific set of clusters, see Placement for more options.

- To apply the configuration to a single specific cluster, see the next section Configurations per cluster.
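The hub and spoke Placements above rely on label selector matching. The following Python sketch illustrates the standard Kubernetes label selector semantics, under which NotIn also matches clusters that do not carry the label at all. It is an illustration only, not the Placement controller's code; the function names are hypothetical.

```python
def matches_expression(labels, expr):
    """Evaluate one matchExpressions entry against a cluster's labels."""
    key, op = expr["key"], expr["operator"]
    values = expr.get("values", [])
    if op == "In":
        return key in labels and labels[key] in values
    if op == "NotIn":
        # A cluster without the label also satisfies NotIn.
        return key not in labels or labels[key] not in values
    if op == "Exists":
        return key in labels
    if op == "DoesNotExist":
        return key not in labels
    raise ValueError(f"unsupported operator: {op}")


def matches_selector(labels, selector):
    """All matchLabels and matchExpressions entries must hold."""
    for key, value in selector.get("matchLabels", {}).items():
        if labels.get(key) != value:
            return False
    return all(matches_expression(labels, expr)
               for expr in selector.get("matchExpressions", []))


hub_selector = {"matchLabels": {"local-cluster": "true"}}
spoke_selector = {"matchExpressions": [
    {"key": "local-cluster", "operator": "NotIn", "values": ["true"]},
]}

hub_labels = {"local-cluster": "true"}
spoke_labels = {}  # a spoke cluster typically has no local-cluster label

print(matches_selector(hub_labels, hub_selector))
print(matches_selector(spoke_labels, spoke_selector))
```

This is why the spoke Placement needs no extra label on the spoke clusters: the absence of local-cluster is enough for NotIn to match.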

Configurations per cluster

In ManagedClusterAddOn, spec.configs is a list of add-on configurations, for scenarios where the current add-on has its own configurations. Since OCM v0.15.0, it also supports defining multiple configurations with the same group and resource. These configurations override, by group and resource, the Default configurations and the Configurations per install strategy defined in ClusterManagementAddOn.

In the example below, the add-on on cluster1 will use “cluster1-deploy-config” and “cluster1-addon-template”.

apiVersion: addon.open-cluster-management.io/v1alpha1
kind: ManagedClusterAddOn
metadata:
  name: helloworld
  namespace: cluster1
spec:
  configs:
  - name: cluster1-deploy-config
    namespace: open-cluster-management
    group: addon.open-cluster-management.io
    resource: addondeploymentconfigs
  - name: cluster1-addon-template
    namespace: open-cluster-management
    group: addon.open-cluster-management.io
    resource: addontemplates

Supported configurations

Supported configurations are the configuration types that are allowed to override the add-on configurations defined in the ClusterManagementAddOn spec. They are listed in the ManagedClusterAddOn status.supportedConfigs, for example:

apiVersion: addon.open-cluster-management.io/v1alpha1
kind: ManagedClusterAddOn
metadata:
  name: helloworld
  namespace: cluster1
spec:
  ...
status:
  ...
  supportedConfigs:
  - group: addon.open-cluster-management.io
    resource: addondeploymentconfigs
  - group: addon.open-cluster-management.io
    resource: addontemplates

Effective configurations

As described above, there are 3 places to define the add-on configurations. They have an override order, and eventually only one takes effect. The final effective configurations are listed in the ManagedClusterAddOn status.configReferences.

- desiredConfig records the desired config and its spec hash.

- lastAppliedConfig records the config when the corresponding ManifestWork is applied successfully.

For example:

apiVersion: addon.open-cluster-management.io/v1alpha1
kind: ManagedClusterAddOn
metadata:
  name: helloworld
  namespace: cluster1
...
status:
  ...
  configReferences:
  - desiredConfig:
      name: cluster1-addon-template
      namespace: open-cluster-management
      specHash: dcf88f5b11bd191ed2f886675f967684da8b5bcbe6902458f672277d469e2044
    group: addon.open-cluster-management.io
    lastAppliedConfig:
      name: cluster1-addon-template
      namespace: open-cluster-management
      specHash: dcf88f5b11bd191ed2f886675f967684da8b5bcbe6902458f672277d469e2044
    lastObservedGeneration: 1
    name: cluster1-addon-template
    resource: addontemplates
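The override order across the three places can be sketched as follows. This is a simplified Python illustration of the behavior described in this section, not the add-on manager's code: the function name effective_configs and the helper cfg are hypothetical, and the sketch assumes that a later layer replaces every configuration of the same group and resource from an earlier layer.

```python
GROUP = "addon.open-cluster-management.io"
NAMESPACE = "open-cluster-management"


def cfg(resource, name):
    """Hypothetical helper that builds one configuration reference."""
    return {"group": GROUP, "resource": resource,
            "namespace": NAMESPACE, "name": name}


def effective_configs(defaults, install_strategy, per_cluster):
    """Merge the three layers, keyed by (group, resource).

    Order of precedence, lowest to highest:
    default configurations, configurations per install strategy,
    configurations per cluster.
    """
    effective = {}
    for layer in (defaults, install_strategy, per_cluster):
        grouped = {}
        for item in layer:
            key = (item["group"], item["resource"])
            grouped.setdefault(key, []).append(item["name"])
        # A later layer replaces earlier entries with the same key.
        effective.update(grouped)
    return effective


result = effective_configs(
    defaults=[cfg("addondeploymentconfigs", "default-deploy-config"),
              cfg("addontemplates", "default-addon-template")],
    install_strategy=[cfg("addondeploymentconfigs", "override-deploy-config")],
    per_cluster=[cfg("addontemplates", "cluster1-addon-template")],
)
for key, names in result.items():
    print(key, names)
```

In this example the deployment config comes from the install strategy and the add-on template from the per-cluster setting, because each layer only overrides the group and resource pairs it actually defines.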

2.4 - Register a cluster via gRPC

gRPC-based registration provides an alternative connection mechanism for managed clusters to register with the hub cluster. Instead of each klusterlet agent connecting directly to the hub’s Kubernetes API server, agents communicate through a gRPC server deployed on the hub. This approach offers better isolation and reduces the exposure of the hub API server to managed clusters.

Overview

In the traditional registration model, each managed cluster’s klusterlet agent requires a hub-kubeconfig with limited permissions to connect directly to the hub API server. The gRPC-based registration introduces a gRPC server on the hub that acts as an intermediary, providing the same registration process while changing only the connection mechanism.

Benefits

- Better isolation: Managed cluster agents do not connect directly to the hub API server

- Reduced hub API server exposure: Only the gRPC server needs to be accessible to managed clusters

- Maintained compatibility: Uses the same underlying APIs (ManagedCluster, ManifestWork, etc.)

- Enhanced security: Provides an additional layer of abstraction between managed clusters and the hub control plane

Architecture

The gRPC-based registration consists of:

- gRPC Server: Deployed on the hub cluster, exposes gRPC endpoints for cluster registration

- gRPC Driver: Implemented in both the klusterlet agent and hub components to handle gRPC communication

- CloudEvents Protocol: Used for resource management operations (ManagedCluster, ManifestWork, CSR, Lease, Events, ManagedClusterAddOn)

Prerequisites

- OCM v1.2.0 or later (when gRPC support was introduced)

- Hub cluster with cluster manager installed

- Network connectivity from managed clusters to the hub’s gRPC server endpoint

- Ensure kubectl, kustomize, and clusteradm are installed

- A method to expose the gRPC server (using LoadBalancer service)

Network requirements

Configure your network settings for the managed clusters to allow the following connections:

| Direction | Endpoint | Protocol | Purpose | Used by |
|---|---|---|---|---|
| Outbound | https://{hub-grpc-server-url} | TCP | gRPC server endpoint on the hub cluster | OCM agents on the managed clusters |

Deploy the gRPC server on the hub

Step 1: Configure the ClusterManager with gRPC support

You can configure the ClusterManager with gRPC support using either clusteradm or by directly editing the ClusterManager resource.

Option 1: Using clusteradm (recommended)

Initialize the hub cluster with gRPC support using clusteradm:

clusteradm init --registration-drivers="csr,grpc" --grpc-endpoint-type loadBalancer --bundle-version=latest --context ${CTX_HUB_CLUSTER}

This command will:

- Configure the ClusterManager with both CSR and gRPC registration drivers

- Set up the gRPC server with LoadBalancer endpoint type

- Automatically create and configure the LoadBalancer service

After initialization, you can skip to Step 3: Verify the gRPC server deployment.

Option 2: Manual ClusterManager configuration

To enable gRPC-based registration manually, you need to configure the ClusterManager resource with the following:

- Add grpc to the registrationDrivers field under registrationConfiguration

- Configure the serverConfiguration section with endpoint exposure

Here’s a complete example ClusterManager configuration using LoadBalancer type:

apiVersion: operator.open-cluster-management.io/v1
kind: ClusterManager
metadata:
  name: cluster-manager
spec:
  # Registration configuration with gRPC driver
  registrationConfiguration:
    # Add gRPC to the list of registration drivers
    registrationDrivers:
    - authType: grpc
      grpc:
        # Optional: list of auto-approved identities
        autoApprovedIdentities: []
  # Server configuration for the gRPC server
  serverConfiguration:
    # Optional: specify custom image for the server
    # imagePullSpec: quay.io/open-cluster-management/registration:latest
    # Endpoint exposure configuration
    # This section configures how the gRPC server endpoint is exposed to managed clusters
    endpointsExposure:
    - protocol: grpc
      # Usage indicates this endpoint is for agent to hub communication
      usage: agentToHub
      grpc:
        type: loadBalancer
        # Note: When using loadBalancer type, the cluster-manager operator
        # will automatically create and manage the LoadBalancer service
        # Note: Custom CA bundle is not currently supported
        # Certificates are automatically generated by the cluster manager

Apply the configuration:

kubectl apply -f clustermanager.yaml --context ${CTX_HUB_CLUSTER}

Step 2: Get the LoadBalancer endpoint

When using type: loadBalancer in the gRPC configuration, the cluster-manager operator automatically creates and manages a LoadBalancer service named cluster-manager-grpc-server. You don’t need to create it manually.

Get the external IP or hostname of the automatically created LoadBalancer:

# For most cloud providers (GCP, Azure, etc.)

kubectl get svc cluster-manager-grpc-server -n open-cluster-management-hub -o jsonpath='{.status.loadBalancer.ingress[0].ip}' --context ${CTX_HUB_CLUSTER}

# For AWS EKS (returns hostname instead of IP)

kubectl get svc cluster-manager-grpc-server -n open-cluster-management-hub -o jsonpath='{.status.loadBalancer.ingress[0].hostname}' --context ${CTX_HUB_CLUSTER}

Make note of this IP or hostname - you’ll use it when registering managed clusters.
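Because some providers report an IP and others a hostname, a script that consumes the Service status has to check both fields. The following Python sketch shows one way to do that on the JSON printed by kubectl get svc cluster-manager-grpc-server -n open-cluster-management-hub -o json. The function name load_balancer_address and the sample addresses are hypothetical.

```python
import json


def load_balancer_address(service):
    """Return the first LoadBalancer IP or hostname, or None if not assigned yet."""
    ingress = service.get("status", {}).get("loadBalancer", {}).get("ingress") or []
    if not ingress:
        return None  # the cloud provider has not assigned an address yet
    first = ingress[0]
    # Prefer the IP; fall back to the hostname (for example on AWS EKS).
    return first.get("ip") or first.get("hostname")


# Sample input shaped like the output of `kubectl get svc ... -o json`.
sample = json.loads("""
{
  "status": {
    "loadBalancer": {
      "ingress": [{"hostname": "hub-grpc.example.com"}]
    }
  }
}
""")
print(load_balancer_address(sample))
```

A None result usually means the LoadBalancer is still being provisioned, so the lookup can simply be retried later.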

Step 3: Verify the gRPC server deployment

Check that the gRPC server is running on the hub cluster:

kubectl get pods -n open-cluster-management-hub --context ${CTX_HUB_CLUSTER}

You should see a pod with a name like cluster-manager-grpc-server-* in the Running state.

Check the gRPC server service and verify that the LoadBalancer has been created and has an external IP:

kubectl get svc cluster-manager-grpc-server -n open-cluster-management-hub --context ${CTX_HUB_CLUSTER}

Test connectivity to the gRPC endpoint:

# Test DNS resolution and HTTPS connectivity

curl -v -k https://hub-grpc.example.com

Register a managed cluster using gRPC

Step 1: Generate the join token

Generate the join token on the hub cluster:

clusteradm get token --context ${CTX_HUB_CLUSTER}

This will output a command similar to:

clusteradm join --hub-token <token> --hub-apiserver <hub-api-url> --cluster-name <cluster-name>

Step 2: Bootstrap the klusterlet

You can bootstrap the klusterlet using one of two approaches:

Option A: Using clusteradm with gRPC flags (recommended)

This approach configures gRPC during the initial join, eliminating the need for manual klusterlet configuration.

First, get the gRPC server endpoint and CA certificate from the hub:

# Get the LoadBalancer hostname/IP

# For GCP, use: .status.loadBalancer.ingress[0].ip

grpc_svr=$(kubectl get svc cluster-manager-grpc-server -n open-cluster-management-hub -o jsonpath='{.status.loadBalancer.ingress[0].hostname}' --context ${CTX_HUB_CLUSTER})

# Extract the CA certificate

kubectl -n open-cluster-management-hub get configmaps ca-bundle-configmap -ojsonpath='{.data.ca-bundle\.crt}' --context ${CTX_HUB_CLUSTER} > grpc-svr-ca.pem

Then join the cluster with gRPC configuration:

clusteradm join \
    --hub-token <your token data> \
    --hub-apiserver <your hub-kube-api-url> \
    --cluster-name "cluster1" \
    --registration-auth grpc \
    --grpc-server ${grpc_svr} \
    --grpc-ca-file grpc-svr-ca.pem \
    --context ${CTX_MANAGED_CLUSTER}

When using this approach, you can skip Step 3 below and proceed directly to Step 4: Accept the join request.

Option B: Bootstrap first, configure gRPC later

Bootstrap the klusterlet without gRPC configuration, then manually configure it afterwards. For a kind cluster, add the --force-internal-endpoint-lookup flag:

clusteradm join \
    --hub-token <your token data> \
    --hub-apiserver https://hub-grpc.example.com \
    --cluster-name "cluster1" \
    --wait \
    --force-internal-endpoint-lookup \
    --context ${CTX_MANAGED_CLUSTER}

For other clusters, omit the flag:

clusteradm join \
    --hub-token <your token data> \
    --hub-apiserver https://hub-grpc.example.com \
    --cluster-name "cluster1" \
    --wait \
    --context ${CTX_MANAGED_CLUSTER}

Step 3: Configure the klusterlet to use gRPC driver (Option B only)

If you used Option A above, skip this step. If you used Option B, configure the klusterlet to use the gRPC registration driver:

kubectl edit klusterlet klusterlet --context ${CTX_MANAGED_CLUSTER}

Update the klusterlet configuration:

spec:
  registrationConfiguration:
    # Specify the gRPC registration driver
    registrationDriver:
      authType: grpc

The klusterlet will automatically restart and re-register using the gRPC connection.

Step 4: Accept the join request

On the hub cluster, accept the cluster registration request:

clusteradm accept --clusters cluster1 --context ${CTX_HUB_CLUSTER}

Step 5: Verify the registration

Verify that the managed cluster is registered successfully:

kubectl get managedcluster cluster1 --context ${CTX_HUB_CLUSTER}

You should see output similar to:

NAME HUB ACCEPTED MANAGED CLUSTER URLS JOINED AVAILABLE AGE

cluster1 true https://hub-grpc.example.com True True 2m

Check the klusterlet status on the managed cluster:

kubectl get klusterlet klusterlet -o yaml --context ${CTX_MANAGED_CLUSTER}

The klusterlet should show the gRPC registration driver in use:

spec:
  registrationConfiguration:
    registrationDriver:
      authType: grpc

Check the klusterlet registration agent logs to verify gRPC connection:

kubectl logs -n open-cluster-management-agent deployment/klusterlet-registration-agent --context ${CTX_MANAGED_CLUSTER}

You should see log entries indicating successful gRPC connection to the hub.

Important limitations

Add-on compatibility: Currently, add-ons are not able to use the gRPC endpoint. Add-ons will continue to connect directly to the hub cluster’s Kubernetes API server even when the klusterlet is using gRPC-based registration. This means:

- You must ensure that add-ons can still reach the hub cluster’s Kubernetes API server

- The network requirements for add-ons remain unchanged from the standard registration model

- This limitation may be addressed in future versions of OCM

Configuration examples

Complete ClusterManager configuration with both CSR and gRPC drivers

Here’s an example that supports both traditional CSR-based and gRPC-based registration:

apiVersion: operator.open-cluster-management.io/v1
kind: ClusterManager
metadata:
  name: cluster-manager
spec:
  registrationConfiguration:
    # Support both CSR (traditional) and gRPC registration
    registrationDrivers:
    # Keep CSR for backward compatibility
    - authType: csr
    # Add gRPC driver
    - authType: grpc
      grpc:
        # Optional: auto-approve specific identities
        autoApprovedIdentities:
        - system:serviceaccount:open-cluster-management:cluster-bootstrap
  serverConfiguration:
    # Optionally specify a custom image
    imagePullSpec: quay.io/open-cluster-management/registration:v0.15.0
    endpointsExposure:
    - protocol: grpc
      usage: agentToHub
      grpc:
        type: loadBalancer

Klusterlet configuration

The klusterlet configuration is simple - just specify the gRPC auth type:

apiVersion: operator.open-cluster-management.io/v1
kind: Klusterlet
metadata:
  name: klusterlet
spec:
  clusterName: cluster1
  registrationConfiguration:
    registrationDriver:
      authType: grpc

The gRPC endpoint information is automatically provided through the bootstrap secret - no additional endpoint configuration is needed in the Klusterlet spec.

Troubleshooting

gRPC server not running

If the gRPC server pod is not running on the hub:

# Check pod status

kubectl get pods -n open-cluster-management-hub --context ${CTX_HUB_CLUSTER}

# Check pod logs

kubectl logs -n open-cluster-management-hub deployment/cluster-manager-grpc-server --context ${CTX_HUB_CLUSTER}

# Verify ClusterManager configuration

kubectl get clustermanager cluster-manager -o yaml --context ${CTX_HUB_CLUSTER}

Common issues:

- Missing registrationDrivers field with grpc authType in registrationConfiguration

- Missing serverConfiguration section

- Missing or incorrect endpointsExposure configuration

- Ensure the protocol field is set to grpc in endpointsExposure

LoadBalancer not working

If the automatically created LoadBalancer is not working:

# Check LoadBalancer service (automatically created by cluster-manager operator)

kubectl describe svc cluster-manager-grpc-server -n open-cluster-management-hub --context ${CTX_HUB_CLUSTER}

# Verify the gRPC server service exists

kubectl get svc cluster-manager-grpc-server -n open-cluster-management-hub --context ${CTX_HUB_CLUSTER}

Verify that:

- The LoadBalancer service was automatically created by the cluster-manager operator (if not, check that type: loadBalancer is set in the ClusterManager grpc configuration)

- Your cloud provider supports LoadBalancer services and has allocated an external IP

- TLS certificates are valid and properly configured

- Network policies and firewalls allow traffic on port 443

Connection issues from managed cluster

If the managed cluster cannot connect to the gRPC server:

- Verify network connectivity from the managed cluster:

# From a pod in the managed cluster or from a node
curl -v -k https://hub-grpc.example.com

- Check klusterlet registration agent logs:

kubectl logs -n open-cluster-management-agent deployment/klusterlet-registration-agent --context ${CTX_MANAGED_CLUSTER}

- Verify the klusterlet configuration:

kubectl get klusterlet klusterlet -o jsonpath='{.spec.registrationConfiguration.registrationDriver.authType}' --context ${CTX_MANAGED_CLUSTER}

Expected output: grpc

- Check the bootstrap secret for gRPC configuration:

kubectl get secret bootstrap-hub-kubeconfig -n open-cluster-management-agent -o yaml --context ${CTX_MANAGED_CLUSTER}

TLS certificate issues

If you encounter TLS certificate validation errors:

- The cluster manager automatically generates TLS certificates. You can view them with:

kubectl -n open-cluster-management-hub get cm ca-bundle-configmap -ojsonpath='{.data.ca-bundle\.crt}'
kubectl -n open-cluster-management-hub get secrets signer-secret -ojsonpath="{.data.tls\.crt}"
kubectl -n open-cluster-management-hub get secrets signer-secret -ojsonpath="{.data.tls\.key}"

Note: Custom CA bundles are not currently supported.

- Check certificate validity:

echo | openssl s_client -connect hub-grpc.example.com:443 2>/dev/null | openssl x509 -noout -dates

- Verify the certificate matches the hostname:

echo | openssl s_client -connect hub-grpc.example.com:443 2>/dev/null | openssl x509 -noout -text | grep DNS
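The same two checks (validity dates and DNS names) can also be scripted. The sketch below uses only the Python standard library and is meant as an illustration, not as an OCM tool: the function names are hypothetical, fetch_cert assumes that the CA bundle was saved as grpc-svr-ca.pem, and summarize_cert only looks for an exact DNS name match without handling wildcards.

```python
import socket
import ssl
import time


def fetch_cert(host, port=443, cafile="grpc-svr-ca.pem"):
    """Connect to the endpoint and return the verified peer certificate as a dict."""
    context = ssl.create_default_context(cafile=cafile)
    with socket.create_connection((host, port), timeout=10) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()


def summarize_cert(cert, hostname, now=None):
    """Summarize a certificate dict as returned by SSLSocket.getpeercert()."""
    now = time.time() if now is None else now
    not_before = ssl.cert_time_to_seconds(cert["notBefore"])
    not_after = ssl.cert_time_to_seconds(cert["notAfter"])
    dns_names = [value for kind, value in cert.get("subjectAltName", ())
                 if kind == "DNS"]
    return {
        "valid_now": not_before <= now <= not_after,
        "dns_names": dns_names,
        # Simplification: exact match only, wildcards are not evaluated.
        "hostname_listed": hostname in dns_names,
    }


# Hand-written sample in the format produced by getpeercert().
sample_cert = {
    "notBefore": "Jan  1 00:00:00 2024 GMT",
    "notAfter": "Jan  1 00:00:00 2034 GMT",
    "subjectAltName": (("DNS", "hub-grpc.example.com"),),
}
check_time = ssl.cert_time_to_seconds("Jan  1 00:00:00 2025 GMT")
summary = summarize_cert(sample_cert, "hub-grpc.example.com", now=check_time)
print(summary)
```

Against a real endpoint, summarize_cert(fetch_cert("hub-grpc.example.com"), "hub-grpc.example.com") would perform both checks in one call, provided the CA file is trusted for that server.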

Registration driver mismatch

If you see errors related to registration driver:

# Verify the registration driver is set to grpc

kubectl get klusterlet klusterlet -o jsonpath='{.spec.registrationConfiguration.registrationDriver.authType}' --context ${CTX_MANAGED_CLUSTER}

Expected output: grpc

If the driver is not set or incorrect:

kubectl edit klusterlet klusterlet --context ${CTX_MANAGED_CLUSTER}

And ensure:

spec:
  registrationConfiguration:
    registrationDriver:
      authType: grpc

Add-on connection issues

Remember that add-ons cannot use the gRPC endpoint in the current version. If you experience add-on connection issues:

- Ensure that add-ons can still reach the hub cluster’s Kubernetes API server directly

- Verify network policies allow add-on traffic to the hub API server

- Check add-on agent logs for connection errors:

kubectl logs -n open-cluster-management-agent-addon <addon-pod-name> --context ${CTX_MANAGED_CLUSTER}

Next steps

- Learn about add-on management

- Explore ManifestWork for deploying resources to managed clusters

- Review the register a cluster documentation for standard API-based registration

2.5 - Running on EKS

Use this solution to run an AWS EKS cluster as a hub. This solution uses AWS IAM roles for authentication, so only managed clusters running on EKS can use it.

Refer to this article for detailed registration instructions.

3 - Add-ons and Integrations

Enhance the open-cluster-management core control plane with optional add-ons and integrations.

3.1 - Policy

The Policy Add-on enables auditing and enforcement of configuration across clusters managed by OCM, enhancing security, easing maintenance burdens, and increasing consistency across the clusters for your compliance and reliability requirements.

View the following sections to learn more about the Policy Add-on:

- Policy framework

  Learn about the architecture of the Policy Add-on that delivers policies defined on the hub cluster to the managed clusters and how to install and enable the add-on for your OCM clusters.

- Policy API concepts

  Learn about the APIs that the Policy Add-on uses and how the APIs are related to one another to deliver policies to the clusters managed by OCM.

- Supported managed cluster policy engines

  - Configuration policy

    The ConfigurationPolicy is provided by OCM and defines Kubernetes manifests to compare with objects that currently exist on the cluster. The action that the ConfigurationPolicy will take is determined by its complianceType. Compliance types include musthave, mustnothave, and mustonlyhave. musthave means the object should have the listed keys and values as a subset of the larger object. mustnothave means an object matching the listed keys and values should not exist. mustonlyhave ensures objects only exist with the keys and values exactly as defined.

  - Open Policy Agent Gatekeeper

    Gatekeeper is a validating webhook with auditing capabilities that can enforce custom resource definition-based policies that are run with the Open Policy Agent (OPA). Gatekeeper ConstraintTemplates and constraints can be provided in an OCM Policy to sync to managed clusters that have Gatekeeper installed on them.
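The three compliance types can be illustrated with a small Python sketch. It is a simplified model and not the configuration policy controller's implementation: the function names are hypothetical, list handling is reduced to "every desired item matches some existing item", and mustonlyhave is modeled as plain equality without ignoring server-populated fields.

```python
def is_subset(desired, existing):
    """True if every key and value in desired is present in existing."""
    if isinstance(desired, dict):
        return (isinstance(existing, dict) and
                all(key in existing and is_subset(value, existing[key])
                    for key, value in desired.items()))
    if isinstance(desired, list):
        # Simplification: each desired item must match some existing item.
        return (isinstance(existing, list) and
                all(any(is_subset(d, e) for e in existing) for d in desired))
    return desired == existing


def is_compliant(compliance_type, desired, existing):
    """existing is None when no such object exists on the cluster."""
    if compliance_type == "musthave":
        return existing is not None and is_subset(desired, existing)
    if compliance_type == "mustnothave":
        return existing is None or not is_subset(desired, existing)
    if compliance_type == "mustonlyhave":
        return existing is not None and desired == existing
    raise ValueError(f"unknown complianceType: {compliance_type}")


desired = {"kind": "Namespace", "apiVersion": "v1",
           "metadata": {"name": "prod"}}
existing = {"kind": "Namespace", "apiVersion": "v1",
            "metadata": {"name": "prod", "labels": {"team": "a"}}}

for compliance_type in ("musthave", "mustnothave", "mustonlyhave"):
    print(compliance_type, is_compliant(compliance_type, desired, existing))
```

In the sample, the existing namespace carries an extra label, so it satisfies musthave but not mustonlyhave, and it violates mustnothave because a matching object exists.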

3.1.1 - Policy framework

The policy framework provides governance capabilities to OCM managed Kubernetes clusters. Policies provide visibility and drive remediation for various security and configuration aspects to help IT administrators meet their requirements.

API Concepts

View the Policy API page for additional details about the Policy API managed by the Policy Framework components, including:

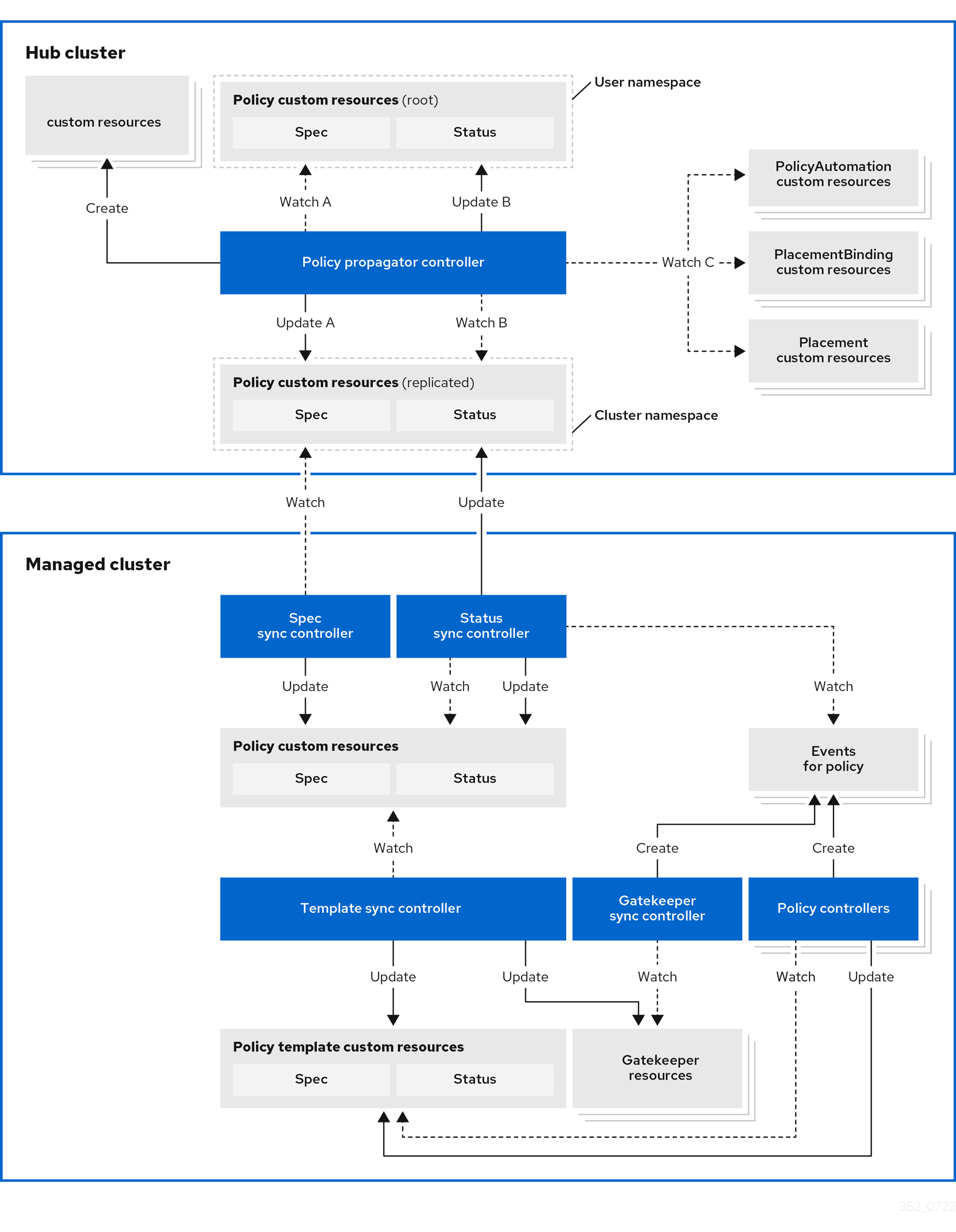

Architecture

The governance policy framework distributes policies to managed clusters and collects results to send back to the hub cluster.

Prerequisite

You must meet the following prerequisites to install the policy framework:

- Ensure the open-cluster-management cluster manager is installed. See Start the control plane for more information.

- Ensure the open-cluster-management klusterlet is installed. See Register a cluster for more information.

- If you are using PlacementRules with your policies, ensure the open-cluster-management application is installed. See Application management for more information. If you are using the default Placement API, you can skip the Application management installation, but you do need to install the PlacementRule CRD with this command:

kubectl apply -f https://raw.githubusercontent.com/open-cluster-management-io/multicloud-operators-subscription/main/deploy/hub-common/apps.open-cluster-management.io_placementrules_crd.yaml

Install the governance-policy-framework hub components

Install via Clusteradm CLI

Ensure clusteradm CLI is installed and is at least v0.3.0. Download and extract the

clusteradm binary. For more details see the

clusteradm GitHub page.

- Deploy the policy framework controllers to the hub cluster:

# The context name of the clusters in your kubeconfig
# If the clusters are created by KinD, then the context name will follow the pattern "kind-<cluster name>".
export CTX_HUB_CLUSTER=<your hub cluster context>           # export CTX_HUB_CLUSTER=kind-hub
export CTX_MANAGED_CLUSTER=<your managed cluster context>   # export CTX_MANAGED_CLUSTER=kind-cluster1

# Set the deployment namespace
export HUB_NAMESPACE="open-cluster-management"

# Deploy the policy framework hub controllers
clusteradm install hub-addon --names governance-policy-framework --context ${CTX_HUB_CLUSTER}

- Ensure the pods are running on the hub with the following command:

$ kubectl get pods -n ${HUB_NAMESPACE}
NAME                                                 READY   STATUS    RESTARTS   AGE
governance-policy-addon-controller-bc78cbcb4-529c2   1/1     Running   0          94s
governance-policy-propagator-8c77f7f5f-kthvh         1/1     Running   0          94s

- See more about the governance-policy-framework components:

Deploy the synchronization components to the managed cluster(s)

Deploy via Clusteradm CLI

- To deploy the synchronization components to a self-managed hub cluster:

clusteradm addon enable --names governance-policy-framework --clusters <managed_hub_cluster_name> --annotate addon.open-cluster-management.io/on-multicluster-hub=true --context ${CTX_HUB_CLUSTER}

To deploy the synchronization components to a managed cluster:

clusteradm addon enable --names governance-policy-framework --clusters <cluster_name> --context ${CTX_HUB_CLUSTER}

- Verify that the governance-policy-framework-addon controller pod is running on the managed cluster with the following command:

$ kubectl get pods -n open-cluster-management-agent-addon
NAME                                               READY   STATUS    RESTARTS   AGE
governance-policy-framework-addon-57579b7c-652zj   1/1     Running   0          87s

What is next

Install the policy controllers to the managed clusters.

3.1.2 - Policy API concepts

Overview

The policy framework has the following API concepts:

- Policy Templates are the policies that perform a desired check or action on a managed cluster. For example, ConfigurationPolicy objects are embedded in Policy objects under the policy-templates array.

- A Policy is a grouping mechanism for Policy Templates and is the smallest deployable unit on the hub cluster. Embedded Policy Templates are distributed to applicable managed clusters and acted upon by the appropriate policy controller.

- A PolicySet is a grouping mechanism of Policy objects. Compliance of all grouped Policy objects is summarized in the PolicySet. A PolicySet is a deployable unit and its distribution is controlled by a Placement.

- A PlacementBinding binds a Placement to a Policy or PolicySet.

Additional resources:

- View the following resources to learn more about the Policy Addon:

Policy

A Policy is a grouping mechanism for Policy Templates and is the smallest deployable unit on the hub cluster. Embedded Policy Templates are distributed to applicable managed clusters and acted upon by the appropriate policy controller. The compliance state and status of a Policy represent all embedded Policy Templates in the Policy. The distribution of Policy objects is controlled by a Placement.

View a simple example of a Policy that embeds a ConfigurationPolicy policy template to manage a namespace called “prod”.

apiVersion: policy.open-cluster-management.io/v1
kind: Policy
metadata:
  name: policy-namespace
  namespace: policies
  annotations:
    policy.open-cluster-management.io/standards: NIST SP 800-53
    policy.open-cluster-management.io/categories: CM Configuration Management
    policy.open-cluster-management.io/controls: CM-2 Baseline Configuration
spec:
  remediationAction: enforce
  disabled: false
  policy-templates:
    - objectDefinition:
        apiVersion: policy.open-cluster-management.io/v1
        kind: ConfigurationPolicy
        metadata:
          name: policy-namespace-example
        spec:
          remediationAction: inform
          severity: low
          object-templates:
            - complianceType: musthave
              objectDefinition:
                kind: Namespace # must have namespace 'prod'
                apiVersion: v1
                metadata:
                  name: prod

The annotations are standard annotations for informational purposes and can be used by user interfaces, custom report scripts, or components that integrate with OCM.

The optional spec.remediationAction field dictates whether the policy controller should inform or enforce when violations are found and overrides the remediationAction field on each policy template. When set to inform, the Policy will become noncompliant if the underlying policy templates detect that the desired state is not met. When set to enforce, the policy controller applies the desired state when necessary and feasible.

The policy-templates array contains the Policy Templates. Here a single ConfigurationPolicy called policy-namespace-example defines a Namespace manifest to compare with objects on the cluster. It has the remediationAction set to inform, but it is overridden by the optional global spec.remediationAction. The severity is for informational purposes, similar to the annotations.

Inside the embedded ConfigurationPolicy, the object-templates section describes the prod Namespace object that the ConfigurationPolicy applies to. The action that the ConfigurationPolicy will take is determined by the complianceType. In this case, it is set to musthave, which means the prod Namespace object will be created if it doesn’t exist. Other compliance types include mustnothave and mustonlyhave. mustnothave would delete the prod Namespace object. mustonlyhave would ensure the prod Namespace object only exists with the fields defined in the ConfigurationPolicy. See the ConfigurationPolicy page for more information or see the templating in configuration policies topic for advanced templating use cases with ConfigurationPolicy.
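To make the other compliance types concrete, here is a minimal sketch of a Policy that uses mustnothave in inform mode, so a managed cluster is only reported as noncompliant when the namespace exists and nothing is deleted. The names policy-namespace-absent, policy-namespace-absent-example, and scratch are hypothetical and chosen only for illustration.

```yaml
apiVersion: policy.open-cluster-management.io/v1
kind: Policy
metadata:
  name: policy-namespace-absent            # hypothetical name
  namespace: policies
spec:
  # inform: report violations only; switching to enforce would remove the object
  remediationAction: inform
  disabled: false
  policy-templates:
    - objectDefinition:
        apiVersion: policy.open-cluster-management.io/v1
        kind: ConfigurationPolicy
        metadata:
          name: policy-namespace-absent-example   # hypothetical name
        spec:
          remediationAction: inform
          severity: low
          object-templates:
            # mustnothave: a matching object should not exist on the cluster
            - complianceType: mustnothave
              objectDefinition:
                kind: Namespace
                apiVersion: v1
                metadata:
                  name: scratch                   # hypothetical namespace
```

Starting with inform is a cautious way to preview which clusters would be affected before changing the remediation action to enforce.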

When the Policy is bound to a Placement using a PlacementBinding, the

Policy status will report on each cluster that matches the bound Placement:

status:
  compliant: Compliant
  placement:
    - placement: placement-hub-cluster
      placementBinding: binding-policy-namespace
  status:
    - clustername: local-cluster
      clusternamespace: local-cluster
      compliant: Compliant

To fully explore the Policy API, run the following command:

kubectl get crd policies.policy.open-cluster-management.io -o yaml

To fully explore the ConfigurationPolicy API, run the following command:

kubectl get crd configurationpolicies.policy.open-cluster-management.io -o yaml

PlacementBinding

A PlacementBinding binds a Placement to a Policy or PolicySet.

Below is an example of a PlacementBinding that binds the policy-namespace Policy to the placement-hub-cluster Placement.

apiVersion: policy.open-cluster-management.io/v1
kind: PlacementBinding
metadata:
  name: binding-policy-namespace
  namespace: policies
placementRef:
  apiGroup: cluster.open-cluster-management.io
  kind: Placement
  name: placement-hub-cluster
subjects:
  - apiGroup: policy.open-cluster-management.io
    kind: Policy
    name: policy-namespace

Once the Policy is bound, it will be distributed to and acted upon by the managed clusters that match the Placement.
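The PlacementBinding refers to a Placement named placement-hub-cluster that this page does not show. The following is a minimal sketch of what such a Placement might look like. The label key and value used to select the cluster (name: local-cluster) are assumptions, so substitute a label that actually exists on your ManagedCluster. The sketch also omits the ManagedClusterSetBinding that a Placement typically needs in its namespace before it can select any clusters.

```yaml
apiVersion: cluster.open-cluster-management.io/v1beta1
kind: Placement
metadata:
  name: placement-hub-cluster
  namespace: policies          # same namespace as the Policy and PlacementBinding
spec:
  predicates:
    - requiredClusterSelector:
        labelSelector:
          matchExpressions:
            # Assumed label; adjust to a label present on the target ManagedCluster
            - key: name
              operator: In
              values:
                - local-cluster
```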

PolicySet

A PolicySet is a grouping mechanism of Policy objects. Compliance of all grouped Policy objects is summarized in the PolicySet. A PolicySet is a deployable unit and its distribution is controlled by a Placement when bound through a PlacementBinding.

This enables a workflow where subject matter experts write Policy objects and then an IT administrator creates a PolicySet that groups the previously written Policy objects and binds the PolicySet to a Placement that deploys the PolicySet.

An example of a PolicySet is shown below.

apiVersion: policy.open-cluster-management.io/v1beta1
kind: PolicySet
metadata:
  name: ocm-hardening
  namespace: policies
spec:
  description: Apply standard best practices for hardening your Open Cluster Management installation.
  policies:
    - policy-check-backups
    - policy-managedclusteraddon-available
    - policy-subscriptions
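Because a PlacementBinding can take a PolicySet as its subject, the ocm-hardening PolicySet could be distributed with a binding along these lines. This is a sketch only: the binding name binding-ocm-hardening is hypothetical, and it assumes a Placement called placement-hub-cluster already exists in the policies namespace.

```yaml
apiVersion: policy.open-cluster-management.io/v1
kind: PlacementBinding
metadata:
  name: binding-ocm-hardening   # hypothetical name
  namespace: policies
placementRef:
  apiGroup: cluster.open-cluster-management.io
  kind: Placement
  name: placement-hub-cluster   # assumed to exist in this namespace
subjects:
  # The subject kind is PolicySet rather than Policy
  - apiGroup: policy.open-cluster-management.io
    kind: PolicySet
    name: ocm-hardening
```

Binding the set rather than each Policy keeps the placement decision in one place, which fits the workflow where an administrator groups policies written by others.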

Managed cluster policy controllers

The Policy on the hub delivers the policies defined in spec.policy-templates to the managed clusters via the policy framework controllers. Once on the managed cluster, these Policy Templates are acted upon by the associated controller on the managed cluster. The policy framework supports delivering the Policy Template kinds listed here:

- Configuration policy

The ConfigurationPolicy is provided by OCM and defines Kubernetes manifests to compare with objects that currently exist on the cluster. The action that the ConfigurationPolicy will take is determined by its complianceType. Compliance types include musthave, mustnothave, and mustonlyhave. musthave means the object should have the listed keys and values as a subset of the larger object. mustnothave means an object matching the listed keys and values should not exist. mustonlyhave ensures objects only exist with the keys and values exactly as defined. See the page on Configuration Policy for more information.

- Open Policy Agent Gatekeeper

Gatekeeper is a validating webhook with auditing capabilities that can enforce custom resource definition-based policies that are run with the Open Policy Agent (OPA). Gatekeeper ConstraintTemplates and constraints can be provided in an OCM Policy to sync to managed clusters that have Gatekeeper installed on them. See the page on Gatekeeper integration for more information.

Templating in configuration policies

Configuration policies support the inclusion of Golang text templates in the object definitions. These templates are resolved at runtime either on the hub cluster or the target managed cluster using configurations related to that cluster. This gives you the ability to define configuration policies with dynamic content and to inform or enforce Kubernetes resources that are customized to the target cluster.

The template syntax must follow the Golang template language specification, and the resource definition generated from the resolved template must be valid YAML. (See the Golang documentation about package templates for more information.) Any errors in template validation appear as policy violations. When you use a custom template function, the values are replaced at runtime.

Template functions, such as resource-specific and generic lookup template functions, are available for referencing Kubernetes resources on the hub cluster (using the {{hub ... hub}} delimiters), or managed cluster (using the {{ ... }} delimiters). See the Hub cluster templates section for more details. The resource-specific functions are provided for convenience and make the content of the resources more accessible. If you use the generic function, lookup, which is more advanced, it is best to be familiar with the YAML structure of the resource that is being looked up. In addition to these functions, utility functions like base64encode, base64decode, indent, autoindent, toInt, and toBool are also available.
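As an illustration of the two delimiter styles, the sketch below shows a ConfigurationPolicy as it might appear embedded under policy-templates in a Policy in the policies namespace. It assumes the resource-specific function fromConfigMap takes a namespace, a ConfigMap name, and a key, in that order. The ConfigMap names (source-settings, hub-settings, cluster-settings) and keys are hypothetical.

```yaml
apiVersion: policy.open-cluster-management.io/v1
kind: ConfigurationPolicy
metadata:
  name: policy-templated-configmap     # hypothetical name
spec:
  remediationAction: inform
  severity: low
  object-templates:
    - complianceType: musthave
      objectDefinition:
        apiVersion: v1
        kind: ConfigMap
        metadata:
          name: cluster-settings       # hypothetical target object
          namespace: default
        data:
          # {{ ... }}: resolved on the managed cluster from a ConfigMap there
          region: '{{ fromConfigMap "default" "source-settings" "region" }}'
          # {{hub ... hub}}: resolved on the hub cluster from a ConfigMap there
          log-level: '{{hub fromConfigMap "policies" "hub-settings" "log-level" hub}}'
```

Each template is wrapped in single quotes so that the unresolved manifest is still valid YAML.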

To conform templates with YAML syntax, templates must be set in the policy resource as strings using quotes or a block

character (| or >). This causes the resolved template value to also be a string. To override this, consider using